Dear readers, With the launch of e-newsletter CUHK in Focus, CUHKUPDates has retired and this site will no longer be updated. To stay abreast of the University’s latest news, please go to https://focus.cuhk.edu.hk. Thank you.

The Butterfly Effect: Network Coding Trumps Routing

It is no coincidence that the Internet is often likened to an information superhighway, where information (measured in megabytes and increasingly in terabytes and petabytes) travels from one spot to another, stopping at nodes or intersections to the directions of routers which control traffic in the virtual sphere.

As with one made in concrete and steel, this information superhighway does not always offer a smooth ride. Like a real road with definite width and length, any information pathway or channel must have its maximum capacity. When two carriers (vehicles or packets of information) come to the same intersection, only one of them can pass at one time and the other has to wait. Thus a high volume of traffic means the highway gets jammed easily and frequently. So when you could not get on the web instantly to purchase your favourite concert or football tickets when they first go on sale, you know there are thousands if not millions of others who have got on the net to compete with you for the tickets.

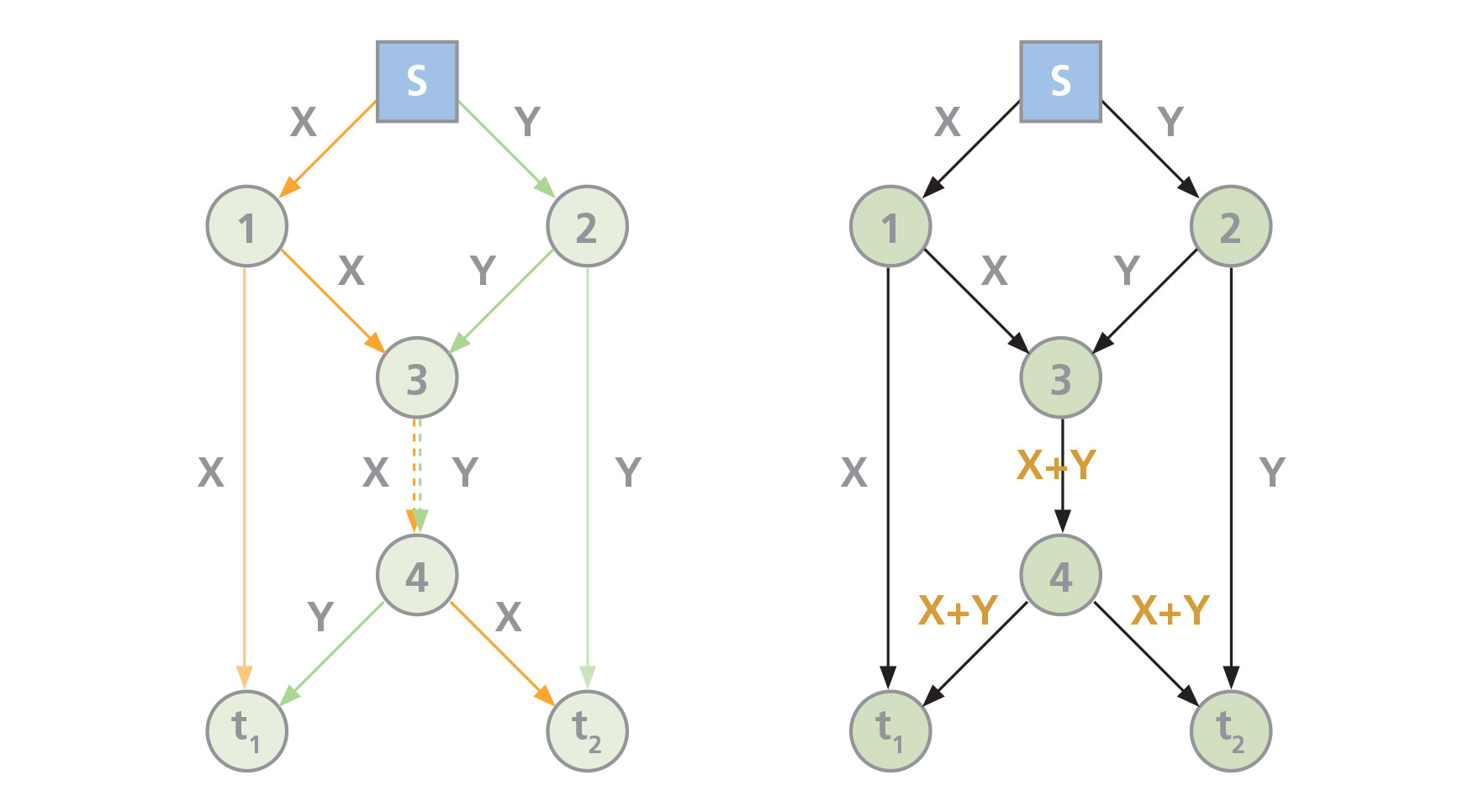

In today’s connected world, a vast population of users would simultaneously communicate through a shared network. The most obvious example is the Internet which is essentially a labyrinth of branching, intersecting and merging pathways. An elementary model of a multi-cast (one-to-many) communication network can be visualized in figure 1.

The messages X and Y originating from the source s are sent via different routes to their respective destinations t2 and t1. The numbered nodes (routers for our purpose) are where such messages get stored and forwarded. Each line represents a channel on which one bit of information can be sent at any particular moment (its channel capacity). We can thus visualize two paths taken by X and Y to travel to their respective destinations:

X: s—1—3—4—t2

Y: s—2—3—4—t1

It is apparent that a bottleneck occurs between nodes 3 and 4 where the channel capacity cannot allow the two bits of information X and Y to pass through at the same time.

In 2000, a group of information theorists including Prof. Raymond W. Yeung, now Choh-Ming Li Professor of Information Engineering at CUHK, and Prof. Bob Li, also of CUHK, proposed a new model of packeting and forwarding information in a communication network. They theorized that an intermediate node in the network does not necessarily have to pass on the incoming packets of information per se but instead can mix and encode their contents and forward functions of the packets to achieve maximum bandwidth utilization. Without going into technical details, the contents of the incoming packets can be conveniently encoded by taking their linear combinations.

To illustrate with the same model (figure 1), the two messages X and Y get coded at node 3 into one X+Y which, not of two bits but one bit only, is passed to node 4 and onwards to the destinations t2 and t1, respectively. Without exceeding the channel capacity and with no delay in transmission time, X and Y are able to complete their journey. At their destinations, the original messages can be reconstructed if sufficient clues or instructions have been given. A higher throughput is thereby achieved, as well as greater reliability because the risk of losing some packets of information and not being able to recover the same has been reduced. Internet browsers can expect fewer occasions of their computers not responding and needing to reload pictures and texts. In terms of transmission efficiency, coding clearly outperforms routing.

The new technique is known as network coding and such network has come to be known as the Butterfly Network. It has revolutionized the view of information as a static and immutable entity which gets passed from one place to another as an indivisible whole. According to Professor Yeung, ‘Information is not a commodity when it comes to network communications.’ The advantages of the network coding approach are, in the words of Yunnan Wu of Microsoft Research and Prof. Baochun Li of the University of Toronto, delivery efficiency, ease of management, and resilience to network dynamics.

Another example of a shared network of communication can be found in satellite broadcast, where signals from a ground transmitter are sent up to the orbiting satellite which then transmits the same signals to one or more receiving stations on the ground, as in figure 2. The transmitter is the source, the satellite the relay, and the receiving station the destination. Professor Yeung said that most satellite communications today are still based on the routing model. With so many signals crisscrossing in the sky, it is small wonder that logjams sometimes occur, particularly in the peak hours when information from the same source is sought by millions around the globe. But if the coding option is used instead and signals are coded by the satellite and sent back to the receivers (figure 2), a more efficient transmission would result. Viewers of live broadcasts of football will experience fewer interruptions due to ‘technical problems’.

Today, the effects of the Butterfly Network are felt far and wide. Network coding has been developed and applied in wireless communication, data storage, channel coding, computer networks, switching theory, and cryptography. Its theoretical implications have been pursued in mathematics, physics, and biology with groundbreaking results.

Professor Yeung is continuing his pioneering work in network coding at CUHK’s Institute of Network Coding which, co-founded and co-directed by himself and Prof. Bob Li, is one of the Areas of Excellence research schemes recognized by the funding authority of the government of Hong Kong. The institute currently conducts forefront research on the theory of network coding and its various applications on the Internet, wireless communications, information storage and security, and bioinformatics. Professor Yeung sees massive scope for development and application in view of the increasingly massive data involved in an increasingly complex and dynamic network environment. For example, the cloud computing concept is predicated on massive data storage and reliable and secure retrieval capability. Mobile applications, whether in gaming, audio-visual data streaming, or social chatting and conferencing, all depend on a smooth and fast many-to-many communication network with constantly changing sources and destinations.

What started over a decade ago as a bold mathematical reconceptualization has resulted in perhaps one of the most dazzling and far-reaching developments in telecommunication technology of the twenty-first century. Professor Yeung said, ‘It is sometimes said that the information age we live in was brought about by the third industrial revolution. I believe that network coding might be the steam engine that takes us to the next phase of the revolution.’

This article was originally published in No. 420, Newsletter in Jun 2013.