
Into the Black Box

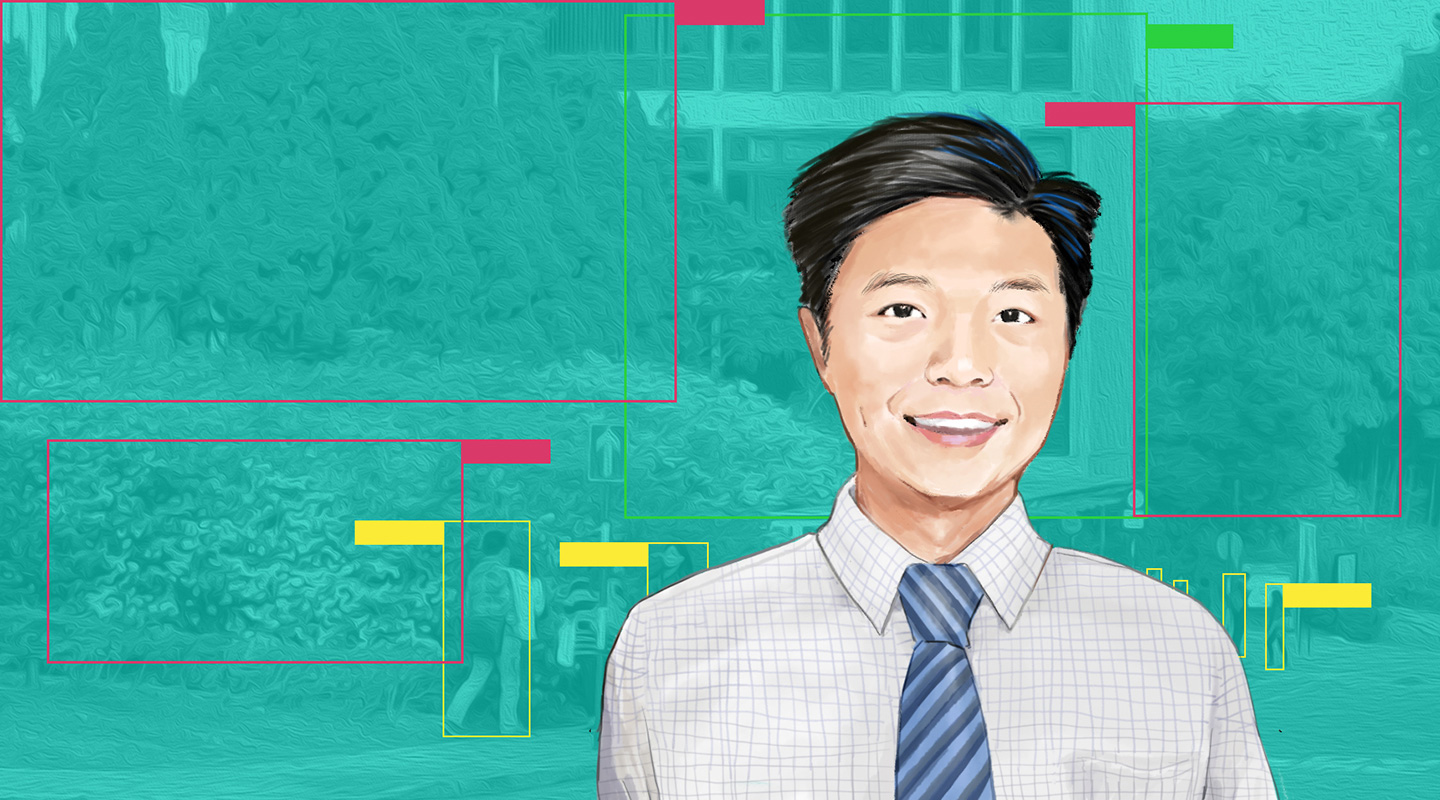

Zhou Bolei navigates the labyrinth of machine learning

As the pandemic rages on, I talked to Prof. Zhou Bolei online from home. I’m not a huge fan of online meetings, partly because of the way I look on webcams. But I’ve gotten less uncomfortable with them, thanks to a development in teleconferencing technology.

Previously, you’d need a green screen if you wanted to replace your background with a virtual one in a live video stream. With a recently developed video processing technology, you can do that without a green screen. Besides the much-discussed Zoom, the technology has been integrated into a wide range of commercially available online communication software. Now I could easily avoid getting caught on camera with a pile of laundry behind me, all with the click of a button.

This is a miracle in every sense of the word. Indeed, not even the professionals fully understand its inner workings. As it turns out, my interviewee has taken up the challenge of solving the mysteries surrounding it.

Professor Zhou is an artificial intelligence (AI) expert at the Department of Information Engineering. Specifically, he’s interested in an emerging subset of AI called machine learning.

‘Machine learning is about teaching a machine to learn the pattern of the data and understand it,’ Professor Zhou said.

To give a computer the ability to discern the background in a video and change it, we would have to tell it what constitutes a background. But since backgrounds come in all shapes and sizes, it would take an obscenely long time for a human to figure out the pattern they share and explain it to the computer.

This is where machine learning comes in. Instead of slowly trying to spoon-feed our computer with what it needs to know about backgrounds in order to detect them, we let it figure it out on its own using its superhuman processing power. All we do is give it a bunch of samples. Soon it’ll learn what it means to be a background and know what to look for in future.

‘The same sort of applications has been used in autonomous driving,’ Professor Zhou said, naming just one of machine learning’s many other uses. From covering up messy bedrooms to telling apart a road and a pavement, and from playing chess to making medical diagnoses, computers are acquiring new abilities every day through self-learning.

But in this brave new world a beast lurks in the dark, and it is becoming more of a threat as computers become ubiquitous: we don’t know exactly what they have learnt that puts them in a position to run our lives.

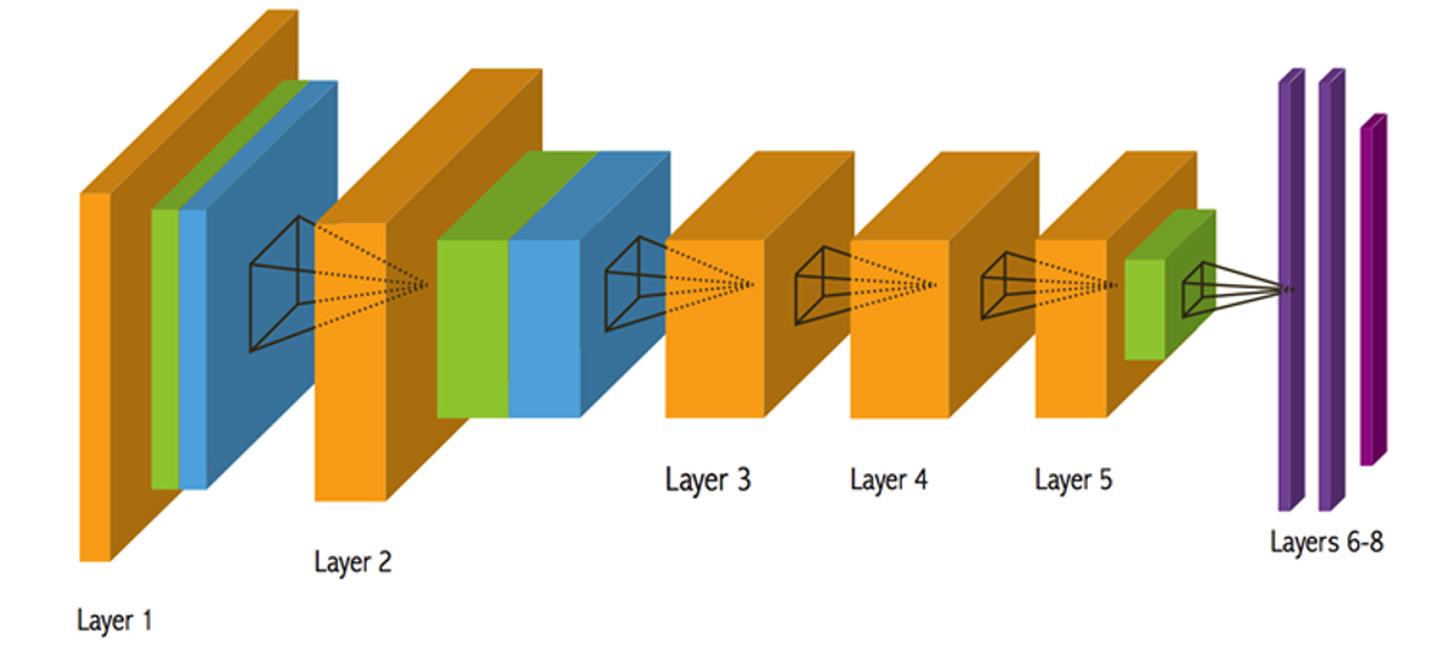

‘Given that there’re so many parameters and operators, it’s really hard to understand what’s going on in there,’ Professor Zhou explained, pointing to the processes by which computers learn, where millions of samples are digested in a multi-layered system called a neural network. These processes are so complex that they’re known as ‘black boxes’ even to the programmers, whose job is really just to build a stage for the computer to work its magic.

This poses serious problems. What computers learn informs their decisions, and these black boxes prevent us from inspecting that knowledge. Because of them, we can’t see what makes a computer misdiagnose a patient with cancer or why a self-driving car runs into a person. Also, they lock away valuable insights gathered by the computer.

‘The art of Go, which has taken humans two thousand years to develop, can be mastered by a computer in one week,’ Professor Zhou said, noting that computers have already outperformed humans with programmes like AlphaGo. ‘We can learn from their strategies.’

In view of these problems, Professor Zhou wishes to see through the black box. After receiving a master’s degree in information engineering here at CUHK, he did a PhD at the Massachusetts Institute of Technology. There he developed with his team a technique called class activation mapping (CAM).

As we’ve seen, computers can now identify the background in any video after looking at some samples. Previously, however, we had no way of knowing exactly what they had inferred from the samples to be able to do that. What CAM can do is highlight in a heatmap the features they focused on when identifying the background, thereby revealing their understanding of what backgrounds generally are.

‘People use this technique to diagnose a computer’s mistakes,’ Professor Zhou noted. Researchers at Stanford University have used CAM to see exactly what it is in a chest radiograph that leads a computer to rightly or wrongly detect pneumonia.
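For readers who want a feel for the heatmap, here is a minimal sketch of the idea as it is commonly described: each feature map produced by the network is scaled by how much it counts towards one class, and the scaled maps are added up. The function names, the tiny 2×2 feature maps and the weights are all made up for illustration; this is not the code used by Professor Zhou’s team.

```python
def class_activation_map(feature_maps, class_weights):
    """Add up the feature maps, each scaled by its weight for one class."""
    height = len(feature_maps[0])
    width = len(feature_maps[0][0])
    heatmap = [[0.0] * width for _ in range(height)]
    for fmap, weight in zip(feature_maps, class_weights):
        for y in range(height):
            for x in range(width):
                heatmap[y][x] += weight * fmap[y][x]
    return heatmap


def normalise(heatmap):
    """Rescale the heatmap to the range 0..1 so it can be drawn as colours."""
    flat = [value for row in heatmap for value in row]
    lowest, highest = min(flat), max(flat)
    span = (highest - lowest) or 1.0  # avoid dividing by zero on a flat map
    return [[(value - lowest) / span for value in row] for row in heatmap]


# Hypothetical toy input: two 2x2 feature maps and their class weights.
feature_maps = [
    [[1.0, 0.0], [0.0, 0.0]],  # responds in the top-left corner
    [[0.0, 0.0], [0.0, 1.0]],  # responds in the bottom-right corner
]
class_weights = [2.0, 0.5]  # assumed: the first map matters more for this class

heatmap = normalise(class_activation_map(feature_maps, class_weights))
```

In this toy case the top-left corner ends up hottest, because the feature map that responds there carries the larger weight for the class in question.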

As a PhD student, Professor Zhou was also involved in developing a technique called network dissection. Using this technique, he and his colleagues discovered that much like our brains, computers learn about the nature of a place by focusing on particular objects in the samples.

When we humans learn what a bedroom is, for instance, it is by taking note of specific objects like the bed and the pillow in the sample. Surprisingly, computers do the same.

‘We’ve only told our computers that the samples represent, say, a living room or the streets. We didn’t mark out the objects in the scene,’ Professor Zhou said. Rather than seeing just a muddle of pixels, computers have spontaneously developed the ability to discern discrete objects from their contexts without us humans ever having to draw their attention to them.

‘The objects just emerged in their vision,’ Professor Zhou remarked, still amazed by what computers have on their own come to perceive.

Since returning to CUHK in 2018, he’s been working to unlock other mysteries of machine learning. Having focused on how computers learn to read images, he turned his attention to how they learn to generate them, specifically portraits.

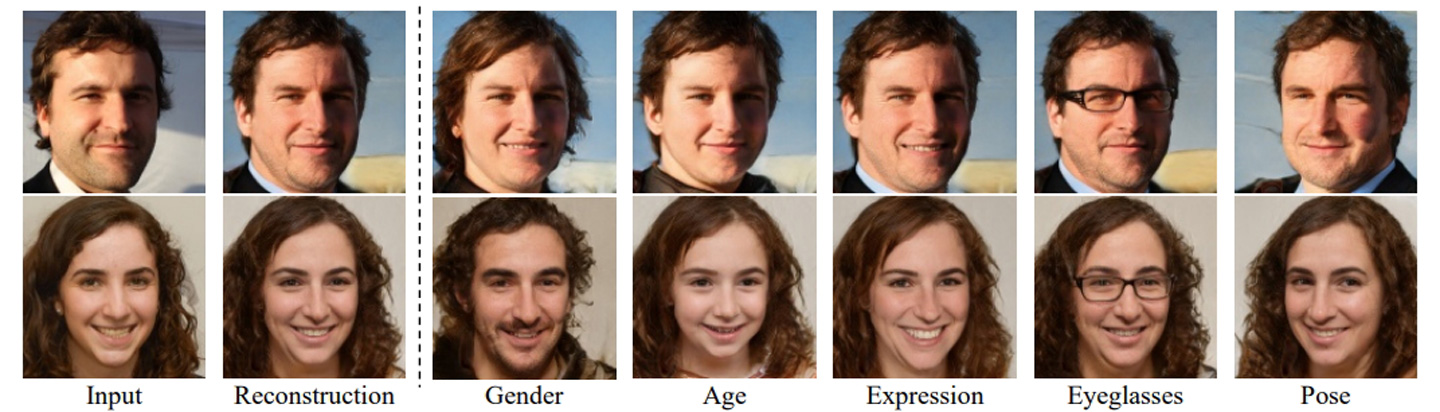

Just as it learns to interpret an image, a computer learns to make one by taking in samples and figuring out their underlying patterns. Along with his graduate student Shen Yujun and colleague Prof. Tang Xiaoou, Professor Zhou discovered that when computers study faces so as to paint one, they can tease out specifics like their age and gender.

This discovery has recently opened up an approach to digital image editing known as InterFaceGAN. We now know that by the time a computer is done looking at the average human face, it will have formed not only an overall impression of it but also a detailed understanding of its smaller, individual features. It is from these conceptions of the specifics that the computer creates new faces.

We can take advantage of this and get the computer to generate, say, photos of ourselves looking younger than we actually do. We simply need to manipulate the computer’s base assumption about the way age manifests itself in the human face, such that it would yield faces that look younger for their age.
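One way to picture this manipulation, as a rough sketch only: the generator turns a list of numbers (a latent code) into a face, and nudging that code along a direction associated with age changes how old the resulting face looks. The latent code, the age direction and the step size below are hypothetical values chosen for illustration, and the image generator itself is left out.

```python
import math


def unit(vector):
    """Scale a vector to length 1 so the step size has a consistent meaning."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


def shift_latent(latent, direction, step):
    """Move a latent code along a direction; a negative step moves the other way."""
    d = unit(direction)
    return [z + step * n for z, n in zip(latent, d)]


# Hypothetical three-number latent code; real ones have hundreds of entries.
latent = [0.5, -1.0, 0.25]
# Assumed direction along which generated faces look older.
age_direction = [0.0, 3.0, 4.0]

# Step against the age direction to ask for a younger-looking face.
younger = shift_latent(latent, age_direction, step=-2.0)
```

Feeding `younger` rather than `latent` to the generator would then, under these assumptions, produce the same face with a more youthful look.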

For his attempts to understand how machines learn, Professor Zhou was named one of this year’s 20 innovators under 35 in Asia Pacific by the MIT Technology Review. But he and other researchers haven’t yet cracked all the mysteries there are, and they still have a long way to go.

‘One direction is to make computers more interpretable to begin with,’ said Professor Zhou, looking ahead. This will be a major shift from the more passive approach currently taken, which is to leave aside the question of interpretability at the design stage and only try to understand in retrospect how the computer learns.

He’s also thinking outside of his discipline. Connecting the digital and the physical worlds, he’s collaborating with mechanical engineers and making robots more reliable by fitting them with more predictable computers. He’s partnering with medical scientists too to maximize computers’ potential to save lives.

‘CUHK has a very strong team in machine learning. It also has a very strong medical school. Hopefully, we can extend our work on AI interpretability into the healthcare sector,’ he said, noting the use of machine learning in analysing medical records.

‘We hope it can benefit humans. That’ll be the real deal.’

Jason Yuen