
Go On, Little Nightingale

What AI does for art—and what it doesn’t

The growing use of AI in the arts, that seeming last stronghold of human excellence in the age of machines, is to some as disquieting as it is astounding. But how much ground can AI art really make? In the third installment of our series on AI, we explore the potential and limits of AI art.

In 1988, Katsuhiro Otomo released the first US edition of his manga masterpiece, Akira. It had always been problematic to get the traditionally black-and-white Japanese comics into the Western world, which preferred colours. With this culture gap in mind, as the American artist Steve Oliff recalls, Otomo decided to publish his work in colour for American readers, and Oliff was given the job of colourizing it, panel by panel. As one can imagine, it was an exceedingly arduous task. For this reason, Akira has been one of the very few manga works that come in both a black-and-white and a colour version.

This is where Prof. Wong Tien-tsin has found yet another use for AI.

PROFESSOR WONG IS A LONG-TIME RESEARCHER of computer graphics at the Department of Computer Science and Engineering. While computer graphics have been around for half a century and have been used with much success in films and video games, the focus had for decades been on photorealistic rendering, that is, the creation of 3D objects, texture and light as they would look in nature. In the 2000s, though, the field saw a major breakthrough, thanks to our fellow gamers. Ever-rising expectations of gaming experience meant better and better graphics processing units (GPUs), which scientists and engineers were quick to exploit. One result was the dawning of computer vision and, consequently, rendering that goes beyond imitations of the real world.

‘When we say a computer “sees” or “understands” an image, it means it has extracted information from the image, like its stylistic features, which it should then be able to work into another image,’ Professor Wong explained. If we show the computer a painting, then, it should be able to generate something akin to human art, something expressive. An attempt at this is AI Gahaku, a web application that took the Internet by storm last year with its promise of turning any photo into a classical painting in the style of the user’s choice. What it does is study existing paintings from different schools and periods and rework the photo based on what it has learnt about the chosen style. And as its name suggests, this feat is made possible by AI, which could only have run on the powerful GPUs that we have been blessed with over the past two decades.

Having both the intelligence and the hardware, computers are ready for a bigger role in making art, especially manga. ‘It’s such a labour-intensive craft,’ said Professor Wong of manga production. ‘Everything from the writing to the visuals will have to be taken care of by the artist and, if they are lucky, a few apprentices, oftentimes by hand.’ Automation is theoretically possible without AI, but then for each task there will have to be a tailor-made algorithm, and each algorithm will involve a number of parameters that must be manually tuned. With AI, which can learn its own parameters and adapt to different problems of a similar nature, parts of the labour can finally be delegated to machines, one example being precisely colourizing black-and-white comics.

‘There was a semiautomatic approach, where the artist would throw in dashes of colour and the computer would build on that. Then came our AI-based solution,’ said Professor Wong. In its initial form, their model involves two stages. In the first, the computer is trained to recognize the black-and-white textures and remove them, leaving only the outlines; in the second, it learns what colours and shades are normally used in different situations, say for human skin and hair, and fills the outlines accordingly. In a later iteration, the model performs the task in one go. The artist can provide the model with hints for better results, but even without human guidance, its performance is still quite acceptable.

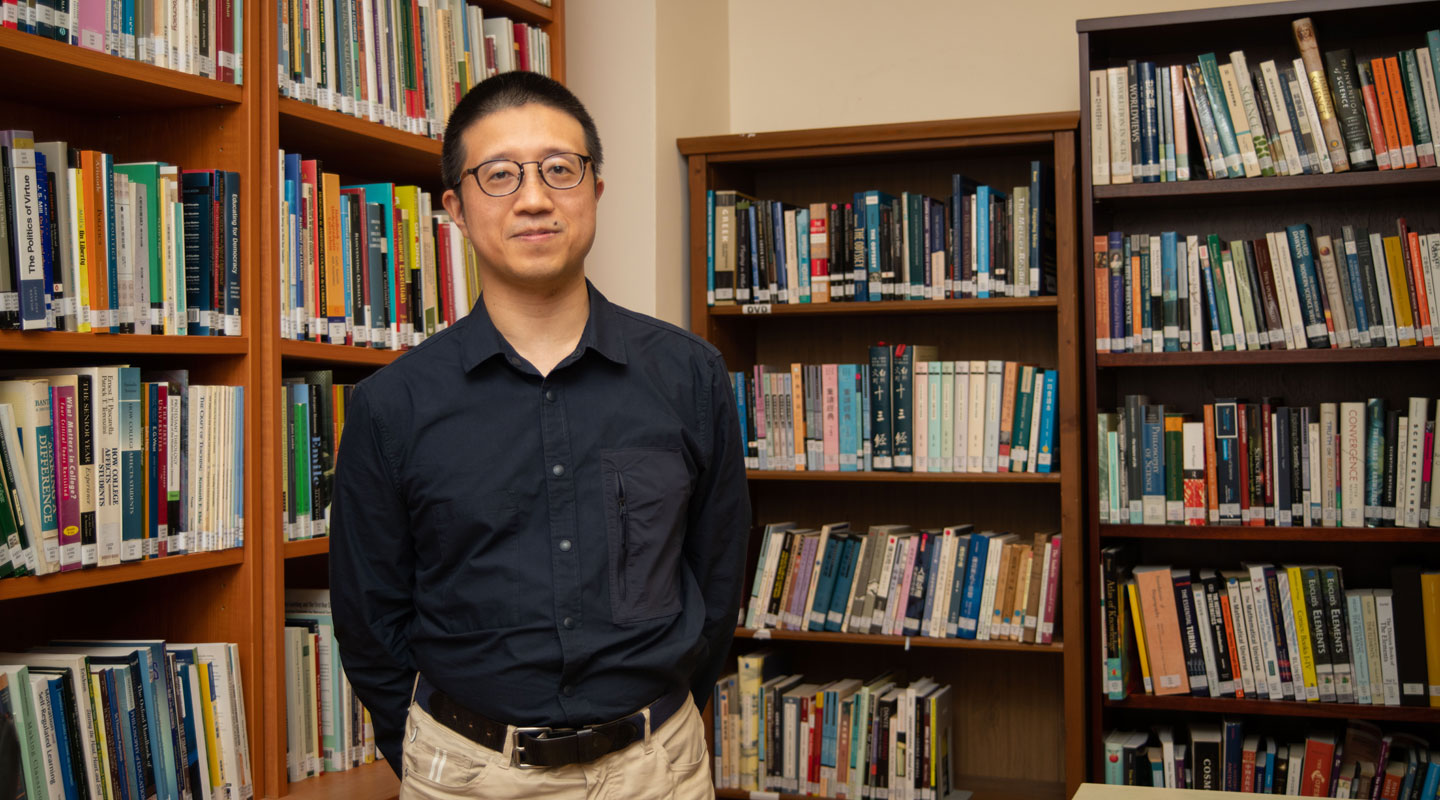

IN MUSIC, THE USE OF AI HAS ALSO FLOURISHED. As in visual art, the dream of automation in music has a long history.

‘Attempts at having computers make sounds began as early as the invention of computers,’ said Dr. Chau Chuck-jee of the Department of Computer Science and Engineering, who teaches the first undergraduate course on computer music at CUHK, and himself studied music here alongside computer engineering. In 1951, Alan Turing’s Ferranti Mark 1 played a snippet of God Save the King for a BBC recording crew, making it the first piece of computer-performed music on tape. In the decades that followed, researchers moved on to exploring ways of making computers write music. Earlier proposals include knowledge-based systems, where experts essentially taught the computer music theory. But as we have seen, writing out the rules is as taxing as it is for us to compose music entirely by hand, if not more so. Also, these programs lack general applicability. Thus came the more robust solution of machine learning.

‘With better hardware after the AI winter at the end of the last century, there was renewed interest in machine-learning approaches to computer music,’ said Dr. Chau. Many of these approaches involve what is known as a neural network, a system modelled after the human brain. Given a large enough database—one that covers, say, the entire Western classical music canon—the network can work out using statistics the norms of music composition or the recurring features in the works of a particular composer. Using this knowledge, it can make new music or imitate a certain composer. Programs employing this technique include AIVA, a Luxembourg-developed virtual composer registered at SACEM, the French association of music writers, and granted copyrights. Another example is DeepBach, a model that writes chorales in the style of Bach given the soprano part.

‘The researchers brought in a number of people with different levels of musical knowledge and played a series of compositions by DeepBach. On average, around half of them thought that they were really by Bach,’ said Dr. Szeto Wai-man of the General Education Foundation Programme, who also specializes in computer music and has been involved in promoting public understanding of AI. As Dr. Szeto reported, the model does occasionally deviate from the norms of music and Bach’s style, and experts can easily differentiate one of its works from an actual Bach. For casual listeners, though, it does the trick.
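The statistical idea behind such composers can be illustrated with something far simpler than a neural network. The sketch below is a first-order Markov chain, not the method used by AIVA or DeepBach: it counts which note tends to follow which in a tiny made-up corpus, then samples a new melody from those counts. The corpus, the note names and the function names are all hypothetical.

```python
import random
from collections import Counter, defaultdict

# Made-up toy corpus of note names; a real system would learn from a large database.
CORPUS = [
    ["C", "D", "E", "C"],
    ["C", "D", "E", "F", "G"],
    ["E", "F", "G", "E", "C"],
]

def learn_transitions(melodies):
    """Count how often each note is followed by each other note."""
    counts = defaultdict(Counter)
    for melody in melodies:
        for current, following in zip(melody, melody[1:]):
            counts[current][following] += 1
    return counts

def generate(counts, start, length, rng):
    """Sample a melody, drawing each next note in proportion to its observed count."""
    melody = [start]
    while len(melody) < length:
        options = counts.get(melody[-1])
        if not options:  # no continuation was ever observed: stop early
            break
        notes, weights = zip(*options.items())
        melody.append(rng.choices(notes, weights=weights, k=1)[0])
    return melody

if __name__ == "__main__":
    table = learn_transitions(CORPUS)
    print(generate(table, "C", 8, random.Random(0)))
```

Every step in the generated melody is one the corpus has already taken, which is why output of this kind tends to sound rule-abiding rather than surprising.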

‘They’re like the student who usually follows the rules and gets the answers right,’ said Dr. Chau of the plethora of AI composers debuting recently. ‘But is that good music?’

ON THE MOST BASIC LEVEL, ART CAN BE QUITE SIMPLE. It is the right colour in the right place, the right note at the right time, and these are things that machines have done reasonably well, being now somewhat able to figure out for themselves what the norm says about being right. But art is also about breaking the norm, and that breakaway must be for a good reason. This transformative aspect of art has been achievable only by living creatures, chiefly humans. With AI, can machines catch up with us in this respect?

‘I won’t rule out that possibility, but it’s not going to be easy,’ said Dr. Szeto. Making it new, to borrow a phrase from the avant-garde American poet Ezra Pound, is not in itself difficult. Back in the 18th century, composers would mix and match pieces of music in sequences determined by a die in a game called the Musikalisches Würfelspiel—and thus was born a new composition. In theory, computers can do the same with randomness added into their otherwise norm-conforming models. But while innovations are made this way, they are all driven by chance, not by some aesthetic motivation. This is where AI comes to a dead end.
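The dice game is simple enough to sketch in a few lines. The version below assumes four bars and a single six-sided die, with six pre-written fragments per bar; the fragment labels are placeholders rather than real music, and the function name is hypothetical.

```python
import random

# Hypothetical table: for each of 4 bars, six pre-written fragments (one per die face).
FRAGMENTS = [
    [f"bar{bar}_option{face}" for face in range(1, 7)]
    for bar in range(1, 5)
]

def roll_composition(fragments, rng):
    """Build a piece by rolling a six-sided die once per bar."""
    piece = []
    for options in fragments:
        face = rng.randint(1, 6)  # die roll, 1 to 6 inclusive
        piece.append(options[face - 1])
    return piece

if __name__ == "__main__":
    print(roll_composition(FRAGMENTS, random.Random()))
```

Each run yields one of 6 × 6 × 6 × 6 = 1,296 possible pieces, and nothing in the code prefers any of them over another: the novelty comes entirely from the die.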

‘Computers do not have motivations. That’s the current state of things,’ said Dr. Chau. For them to get closer to being able to make aesthetic decisions, as Professor Wong noted, we might conduct surveys where humans would rate their works and let them learn what it is that appeals to us. But then of course, it will not be the computer’s own judgment but ours, and what one portion of humanity enjoys is not necessarily enjoyable to another. Furthermore, we should remember that beauty often transcends its time.

‘Stravinsky’s Rite of Spring was so poorly received at its premiere that there was literally a riot. Now it’s a classic,’ said Dr. Szeto. ‘It’s unimaginable for a computer to have the same insight as Stravinsky, to know a work is valuable despite how unpalatable it is to the current taste.’

Ultimately, whether machines can make aesthetic judgements the way humans do, beyond learning what humans find appealing, is a question of whether they can feel or consciously experience the emotions aroused by an artwork. That will require, of course, consciousness, and it is unlikely that machines will come with one for as long as we, their architects, lack an understanding of how our own consciousness came to be, let alone how it might be recreated for computers.

‘AI can have knowledge about emotions the way people who never like heavy metal still know it’s exciting, but knowing an emotion is not the same as feeling it,’ Dr. Szeto explained. We all know heavy metal is exciting by virtue of its loudness, tempo and some of its other objectively definable features, but to feel the excitement is to also register that indescribable, visceral rush. This is what AI misses, and this limited grasp of emotions is why computers still need human intervention when it comes to, say, colourizing manga.

‘Our model will need a guide if there’s an uncommon colour that the human artist wants to use to express a certain emotion,’ said Professor Wong. For instance, if the artist wants the normally blue sky to be painted red for a sense of menace, they will have to intervene and give the model a palette of different shades of red. ‘There are ongoing efforts to make machines extract emotions from a drawing. If they do end up being able to learn what the emotions of a drawing are while knowing what colours normally express those emotions, they might be able to do without a guide. But it’s tough to say how successful it will be, given how hard it is to describe emotions mathematically with all their subtleties.’

‘A THING ABOUT PUBLIC UNDERSTANDING of AI is how extreme it tends to get. People like thinking of AI as either inept or godlike,’ said Dr. Szeto. Two years ago, the Chinese tech conglomerate Huawei caused a stir on the Internet when one of their AI programs completed the melodies of the entire missing third and fourth movements of Schubert’s unfinished Symphony No. 8. But commentators soon pointed out that the attempt was a far cry from Schubert’s style, and as vast a project as it might be, it was still just the melodies, which could not have worked without being arranged into a full orchestral score and performed by humans. Beyond sensationalism and blanket dismissal, how can we make sense of AI’s place in the world of art?

‘It’s more than just an intellectual exercise. We’re really trying to provide the industry with tools they can utilize,’ said Professor Wong of his research, which has also led to a way of automatically converting colour photographs into manga drawings, much to the artists’ convenience. He remembers being approached by a comics publisher trying to remove all the speech bubbles in a work they were digitizing and fill in the gaps. That was 10 years ago, and an automated solution was not available then. The publisher ended up outsourcing the task to Vietnam, spending a whopping 40% of their budget on it alone. Had they come to him 10 years later, the professor joked, the story would have been a lot different, now that his team does have a solution, driven by none other than AI.

‘They may not work a hundred per cent of the time, but with a bit of tweaking by the human user, they do work quite neatly and help cut costs significantly.’

It is the same story with music. With all the virtual composers out there, video producers who are looking for just a reasonably fair piece of music to go with their content can save a good deal of money and time, now that they do not have to hire a human composer. Similarly, game developers can now bring AI composers into their works, providing personalized, non-repeating ambient music for as long as the gameplay might last. Even to the music producers themselves, AI can be immensely helpful, given how much the industry has compartmentalized. Whereas professional arrangers used to depend on composers to give them something to work with, they can now hone their skills with melodies produced by machines.

‘AI can empower non-experts by allowing them to quickly achieve things beyond their remits and focus on their specialties,’ said Dr. Chau.

We have seen that for a computer to write music, it will first need the ability to understand the rules and norms of music. This opens up possibilities for AI to be used in music education, where it can advise students on what makes a composition or performance right and acceptable. It is even possible for AI to help music researchers with the insights it has gathered through studying countless compositions and performances.

‘With its ability to analyze music and, indeed, other forms of art en masse, AI can help scholars gain a bird’s-eye view of a particular style and better understand how humans make art in the first place,’ said Dr. Szeto, gesturing towards possible new directions for the emerging field of digital humanities.

WITH ALL THAT HAS BEEN SAID ABOUT AI AND THE ARTS, perhaps no one has said it better than Hans Christian Andersen in his 1843 fairy tale ‘The Nightingale’, proving again that art is often ahead of its time. The story begins with a Chinese emperor bringing in a nightingale to sing for him, only to then desert it for a mechanical songbird that knows no rest. When this new favourite of his, singing nothing but a monotonous waltz day after day, goes unresponsive because no one is there to wind it up, the emperor regrets having abandoned the nightingale and valued the sounds of a machine above those of a living being. He is right to finally recognize nature’s superiority in art as we should too. But as the nightingale says in defence of its surrogate, the machine has done well for what it was confined to doing.

‘On their own, machines may not be able to make great art. They’re ultimately tools that serve at the pleasure of the human artist or, more accurately, curator,’ said Dr. Chau, affirming the need for humans in art in the age of AI to provide the direction. ‘Within those limits, though, machines do work miracles.’

By jasonyuen@cuhkcontents

Photos by gloriang@cuhkimages, ponyleung@cuhkimages and amytam@cuhkimages

Illustration by amytam@cuhkimages